The term hackathon can evoke a variety of sentiments depending on a software developer’s background. It may remind you of that time you worked your butt off for an entire weekend at a contest, just to earn a second place and a T-shirt (best-case scenario). It may remind you of an event where you met many friends and built some of the wildest project ideas you ever had.

However they turn out, hackathons depend on a stretch of extraordinary focus. This is exactly what makes them so thrilling, and what allows them to produce results nobody expected. For a few years now, we at Up Learn have been experimenting with regular hackathons and how they can improve team productivity, work satisfaction and product quality.

Hackathons at Up Learn

Once every two months, we run a hackathon at Up Learn. Project ideas are stored in a spreadsheet; anyone who wishes to propose a new one can pop it in at any time. To prepare for the event, we hold a one-hour meeting the week before, where we go over the list of ideas and set up teams, each with its own project to work on. The event lasts two consecutive weekdays (usually Thursday and Friday) and includes all interested developers.

During those two days, product work is essentially paused, with the exception of urgent bugs. We make sure this is public knowledge and take this into consideration during product delivery estimation. Finally, the following week we’ll run a retrospective meeting to reflect on the event as well as to share our results with each other and discuss next steps for each project.

This has been working well for us, with a variety of projects successfully incorporated into our product and workflow. To date, we haven’t had to cancel a single hackathon, which is a huge validation in itself.

Past projects

To start off, let’s look over some projects from past hackathons!

Gherkin-based test plan generation

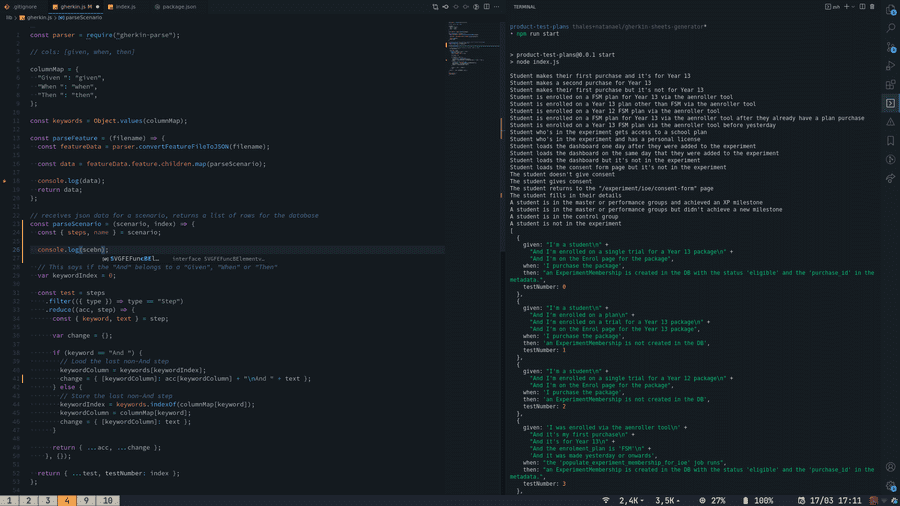

When developing a new feature at Up Learn, we’ll usually begin with a test plan, which will be used to QA the feature once complete. These are spreadsheets describing a list of scenarios, actions and desired outcomes. At some point, we realized that by using a structured test language such as Gherkin, we could describe the same checklist as code in a repository, allowing for code review and all the good stuff that comes from versioning code.

Combining GitLab CI with a few Node libraries (js-git, gherkin parser and googleapis), we came up with a solution that, on each successful merge, checks for new .gherkin files in a features folder, parses them and creates a new, ready-to-go test plan spreadsheet on Google Drive. This test plan generator is now used as a starting point for all new features, improving developer experience and product processes.

Parsing a Gherkin file to generate output
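
To give a flavour of the parsing step, here is a minimal sketch in TypeScript. It is not the generator described above: it swaps the Gherkin parser library for a simplified line-based reader, and the names `TestPlanRow`, `gherkinToRows`, `toSheetValues` and `exampleFeature` are hypothetical, chosen only for illustration. It shows how scenarios and steps could be flattened into the scenario / actions / desired outcomes columns of a test plan.

```typescript
// One row of a test plan: a scenario, the actions to perform, and the desired outcomes.
interface TestPlanRow {
  scenario: string;
  actions: string[];
  outcomes: string[];
}

// Simplified line-based reader (an assumption, not a full Gherkin parser).
// It only understands "Scenario:" and Given/When/Then/And/But steps;
// backgrounds, outlines, tags, doc strings and data tables are ignored.
function gherkinToRows(source: string): TestPlanRow[] {
  const rows: TestPlanRow[] = [];
  let current: TestPlanRow | undefined;
  let target: "actions" | "outcomes" = "actions";

  for (const rawLine of source.split("\n")) {
    const line = rawLine.trim();

    const scenario = /^Scenario:\s*(.+)$/.exec(line);
    if (scenario) {
      current = { scenario: scenario[1] ?? "", actions: [], outcomes: [] };
      rows.push(current);
      target = "actions";
      continue;
    }

    const step = /^(Given|When|Then|And|But)\s+(.+)$/.exec(line);
    if (!step || !current) continue;

    // Given/When describe actions, Then describes outcomes;
    // And/But continue whichever kind of step came before them.
    const keyword = step[1];
    if (keyword === "Then") target = "outcomes";
    else if (keyword === "Given" || keyword === "When") target = "actions";

    current[target].push(step[2] ?? "");
  }
  return rows;
}

// Flatten the rows into a 2D array of cell values, header first.
function toSheetValues(rows: TestPlanRow[]): string[][] {
  const header = ["Scenario", "Actions", "Desired outcomes"];
  const body = rows.map((row) => [
    row.scenario,
    row.actions.join("\n"),
    row.outcomes.join("\n"),
  ]);
  return [header, ...body];
}

// Hypothetical feature file used as sample input.
const exampleFeature = `
Feature: Login
  Scenario: Valid credentials
    Given a registered student
    When they submit the login form
    Then they see their dashboard
    And a welcome message is shown
`;
```

Calling `toSheetValues(gherkinToRows(exampleFeature))` yields a header row plus one row per scenario. Uploading those values to a spreadsheet is a separate step and is left out of the sketch.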

Up Learn Wrapped

Remotion is a tool for generating videos using React, the same framework we use for our front-end. This allows you to create animations with a consistent visual identity, since the website's own CSS and components can be reused. This means automated, customized content for our users!

We came up with the idea of creating personal video retrospectives, summarizing a student’s progress over their past year on the platform. Aside from being a fun experiment and feature, this could have a positive impact on our retention and engagement. Below is the result of this hackathon project.

Code Editor for a DSL (UpQL)

Up Learn relies heavily on quizzes and exam-style questions, both as learning and progress assessment tools. Building a variety of quiz types required us to develop a DSL for defining exams, named UpQL. Since UpQL is meant to be parsed and compiled, we realized the experience for our content team would be much better if they had the usual coding tools: a basic linter, syntax highlighting and error detection. We implemented the editor using Monaco, including a custom syntax definition and communication with our API for UpQL code validation.

LaTeX Toolchain Migration: Mathquill to Mathlive

This is an example of a library migration task force. Mathquill and Mathlive are LaTeX support libraries, both of which implement input and preview generation. At some point it became clear LaTeX customization was an important requirement, as we needed to extend our set of math symbols. Doing this in Mathquill is challenging, so we considered migrating to an alternative.

On top of that, Mathlive offers good-quality front-end components for input and, in our experience, results in a more maintainable codebase. That convinced us to take on the Mathlive migration as a hackathon project. The result was a proof-of-concept.

End-to-End Phoenix View Testing

One of our biggest challenges in testing our codebase is our admin dashboard. Our application is built using Phoenix, a web framework for Elixir, and our admin front-end is built using Phoenix Views. Because of this, its tests cannot be automated with the JS tools used in our front-end (such as Jest), and it is not completely testable with vanilla ExUnit either (as we do for our API code).

For this project, we implemented a proof-of-concept test suite for an admin dashboard page using Wallaby, an E2E testing framework for Phoenix. This allows us to ensure that changes in our API won’t unintentionally alter or break any features of our admin dashboard without having to check manually.

Advantages of Hackathons for an Engineering Team

Hackathons are intimately related to innovation. Regardless of the format, hackathons will generally revolve around a challenge or problem statement with no prescribed solution. More than merely acceptable, new and possibly unusual solutions are in fact desirable and valuable outcomes, and so are failures since they will inevitably bring about some new insight into the problem.

The first major benefit we’ve seen is the opportunity to learn (or practice) a new tool, language or technique. Arguably the main point of interest for many hackathon models, this gives us the chance to rethink coding habits and standards, which ultimately helps us grow as developers and as a team.

Aside from that, running regular hackathons also provides us with a flood of innovative ideas. As engineers, we’re trained to think in terms of problems and solutions. This applies not only to the job at hand, but also to our workflow, tools and team practices. Issues in those areas have an impact on our daily satisfaction with work: they’ll typically make you go “oh, I wish that worked differently!” or “I wish I didn’t have to do this every single time”, but we never seem to find the time to actually tackle them. When given the opportunity to think about what changes we would make, it’s almost impossible not to come up with ideas.

In our experience, hackathon projects usually end up being one of the following (in order of general excitement):

- Proof-of-concept product improvements involving challenges which require considerable technical effort, exploration or a major breakthrough. These improvements usually end up postponed in the interest of delivering features.

- Automation of development processes which take valuable time from the team.

- Technical debt task forces.

Each of these is by itself a major gain for the team. The prospect of creating or changing something meaningful is naturally thrilling, and it keeps the team hyped for the next hackathon. In other words, team engagement and motivation are another major benefit of running hackathons as a team practice.

Finally, sometimes things just don’t work. On these occasions, despite the obvious frustration of a failed project, we still learn from the experience as a team, and we count that as a benefit. When a project idea proves not to be a convincing engineering solution, we can document it so that similar projects in the future explore different paths. If the solution is promising but could not be finished (indeed the most common scenario), we’ll usually re-scope it as part of our regular sprints and bring the project to a conclusion. By then the hackathon has clearly exposed the value proposition and the effort required, which makes expectations simpler to manage.

Challenges and Downsides

As a team practice, periodic hackathons come with their own set of organizational challenges. The most obvious one is reduced engineering availability during the event. This hasn’t been an issue in our experience, but it does require planning ahead and alignment to manage product expectations. On top of the duration of the event, we also have to account for two meetings (planning and retrospective) and generally lower team output the following week; we have observed this drop after basically every hackathon so far. If your team is distributed across multiple time zones, things become even trickier: most of the work during a hackathon is expected to be done in pairs, which is harder to guarantee across large time gaps.

There are, however, more subtle challenges involved in running periodic hackathons successfully. For instance, it’s not easy to create an environment where failures are also valuable. This requires removing any expectation of a return on the effort invested during the event. In other words, one can’t count on a hackathon producing successful results, since the outcome is unknown. Setting expectations on these results defeats the hackathon's purpose of innovation and reduces everything to an engineering problem.

The model we follow, two days every two months, averages out to 1 day per month of exclusive dedication. This adds up to around 5% of total engineering time. Lifting all expectations from 5% of engineering output is tricky to negotiate, even for the long-term benefit of the team and product. Up Learn leadership is on board with this, but it makes starting hackathons as a regular practice in a new company particularly challenging.
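
As a rough check on that 5% figure, the arithmetic can be sketched as below. The 21 working days per month is an assumed average rather than a number from our records, and `hackathonShare` is a hypothetical helper; the planning and retrospective meetings are not included.

```typescript
// Assumption: roughly 21 working days in an average month.
const WORKING_DAYS_PER_MONTH = 21;

// Fraction of engineering time taken by an event of `eventDays` working days
// that is held once every `everyMonths` months.
function hackathonShare(
  eventDays: number,
  everyMonths: number,
  workingDaysPerMonth: number = WORKING_DAYS_PER_MONTH,
): number {
  return eventDays / (everyMonths * workingDaysPerMonth);
}

// Two days every two months: 2 / (2 * 21) = 2 / 42, just under 5%.
const share = hackathonShare(2, 2);
const sharePercent = Math.round(share * 1000) / 10; // one decimal place
```

With these assumptions `sharePercent` comes out at 4.8, which matches the "around 5%" estimate.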

Finally, a huge component of a hackathon's success is novelty, or out-of-the-ordinariness. A hackathon every week would quickly become routine. It wouldn’t feel special, and people would soon be tempted to skip it for the sake of getting other kinds of work done. Finding a balance between regularity and novelty is essential.